The engineering behind making AI voices sound right, every time.

The Challenge

Parkbench generates audio clips every day: meditation guides, sleep aids, conversations, and long-form narrations; all using AI voice synthesis.

Unlike text, where a missing word is immediately visible, audio failures are subtle. A listener might hear a sentence that trails off mid-thought, a word that sounds slightly swallowed, or a pause that feels unnatural.

The core technology, NeuTTS Air, is an autoregressive transformer that generates audio token by token. Like all autoregressive models, it has a finite context window (~2048 tokens, roughly 30 seconds of audio). Push it too far and it doesn’t crash, it simply stops generating.

The audio file looks normal. It plays normally. But the last sentence is just… gone.

This is the worst kind of bug: intermittent, silent, and invisible to automated monitoring.

The Problem We Discovered

A user reported that a generated voice clip had its last word “slightly cut off.”

- Input: 36 words (201 characters)

- Output: 13 seconds of audio (seemingly reasonable)

But on inspection, the last 8 words were completely missing.

The model generated natural-sounding speech for the first 28 words, with proper pacing, intonation, and pauses, then quietly stopped.

Our duration-based detector (which flags audio that’s “too short” for its word count) saw:

13 seconds for 36 words → “Looks fine”

It wasn’t fine.

22% of the content was missing.

Why Simple Checks Don’t Work

Our first instinct was a duration heuristic:

If audio is shorter than expected → it’s probably truncated

This works for obvious failures, but it fundamentally cannot distinguish between:

- A fast speaker who said everything (valid)

- A normal speaker who stopped early (broken)

Speech rate varies:

- Meditation → slow

- Conversations → faster

- Voices → naturally different

A single threshold either:

- Misses real truncation, or

- Flags valid audio (wasting compute)

We tried tightening it. It caught more issues, but also increased false positives.

The Insight: Ask the Audio What It Said

Instead of guessing based on duration…

Why not just listen to the audio?

We already use Whisper (OpenAI’s open-source speech recognition model) running internally.

- ~75MB model

- Runs on CPU

- Transcribes ~15s audio in 1–3 seconds

So we flipped the problem:

- Generate audio

- Transcribe it back to text

- Compare with the original

- Regenerate if needed

This isn’t heuristic. It’s content verification.

How It Works in Practice

Two-Tier Detection

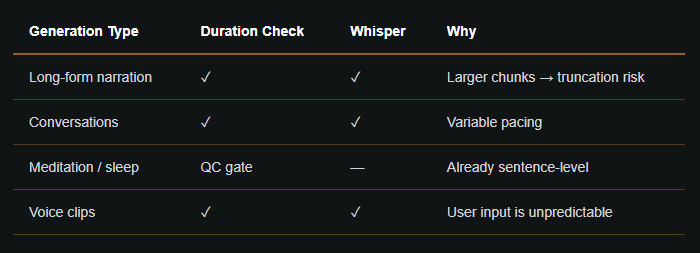

We kept the duration check (it’s free and instant), and added Whisper as a second layer.

Generate audio

→ Duration check (instant)

→ Too short? → Regenerate sentence-by-sentence

→ Looks OK? → Whisper transcription (~1–3s)

→ Words missing? → Regenerate sentence-by-sentence

→ All words present? → Accept audio ✓

Fuzzy Text Matching

Whisper isn’t perfect, so we don’t require exact matches.

We:

- Normalize text (lowercase, remove punctuation)

- Compare words in order using fuzzy matching

- Require ≥85% word coverage

- Explicitly verify the last 3 words

This avoids false positives while reliably catching truncation.

Progressive Fallback

When truncation is detected, we don’t retry blindly, we reduce input size:

- Full chunk (initial attempt)

- Sentence-level generation

- Clause-level splitting (commas, semicolons)

Shorter inputs = safer generation.

Coverage

The Privacy Angle

Everything runs locally.

- Whisper runs on the same CPU pod

- No audio leaves our infrastructure

- No external APIs

This was non-negotiable.

Performance Impact

- Whisper adds 1–3 seconds per chunk

- Typical generation: 2+ minutes → negligible overhead

Fallback regeneration is slower, but only triggered when there’s a real issue.

Correct output on first delivery is worth the cost.

What We Learned

- Heuristics aren’t enough: They catch obvious issues, but not edge cases.

- Reuse existing tools: Whisper was already deployed. This was low effort, high impact.

- Intermittent bugs need deterministic solutions: Verification eliminates entire bug classes.

- Layer defenses: Duration → Whisper → fallback

- Make it toggleable: Feature flags allow instant rollback.

This pattern: generate → verify → regenerate extends naturally:

- Pronunciation validation

- Emotional tone matching

- Multi-speaker consistency

- Music/voice balance

The idea is simple:

Use AI to check AI before humans ever notice.

The Cold-Start Problem

Users reported clipped or garbled first words.

Cause: autoregressive models start with a “cold” hidden state.

Fix: warm-up generation

- Generate a throwaway ~17-word phrase

- Discard it

- Then generate real content

Result: clean starts, no artifacts.

When Fallbacks Fail

A 39-word sentence kept truncating, even after fallback.

Root issue:

- Clause splitting worked

- But recombination logic merged it back

- System returned “unsplittable” → kept bad audio

The Fix

1. Escalation logic

- If 40-word split fails → force 20-word split

2. Missing-tail append

If truncation persists:

- Extract missing tail words

- Generate them separately

- Append with ~50ms silence

- Re-verify with Whisper

The Full Fallback Chain

Generate full chunk

→ Duration + Whisper

→ Truncated? → Sentence split

→ Per sentence: Duration + Whisper

→ Truncated? → Clause split (40 words)

→ Can't split? → Fine split (20 words)

→ Still truncated? → Append missing tail

→ Final Whisper verification

Head + Tail Verification

We now check both ends:

- First 3 words (head)

- Last 3 words (tail)

Both must pass.

Whisper at Every Level

Whisper now runs at:

- Chunk

- Sentence

- Clause

- Final output

This gives precise diagnostics, not just final failure detection.

Full Transcription Logging

We log:

- Original text

- Whisper output

Used for:

- Debugging

- Monitoring word coverage trends

What This Taught Us

- Fallbacks must be tested end-to-end

- Detection without correction is useless

- Surgical fixes beat brute force

- Autoregressive models need priming

- Verification must happen at every layer

Parkbench generates personalized AI audio including meditation, sleep, conversations, and narration.

Everything runs locally, using open-source models.

No user data ever leaves our infrastructure.